Hello,

I’m revisiting a project similar to one I discussed in this 2018 post, where I generated a decent point cloud using low-precision Ground Control Points (GCPs). Back then, ODM used PMVS rather than the powerful SMVS algorithm.

I’m now working on a similar process with aerial photos from the same flight (60% forward overlap, 20-30% side overlap) but covering a different area. The photos are 100 MP, high quality, with a GSD of about 50 cm. I’m using GNSS data from dual- and triple-frequency DIY receivers, with millimetric error.

To fit ODM’s workflow, I’ve written multiple shell scripts and developed a hybrid workflow that I hope to publish soon. It uses QGIS and GCPEditorPro (a fantastic tool!) to find common points between the present (e.g., Google Satellite reference imagery) and the past (in my case, the year 2000). My scripts batch-process tasks such as cropping the photos, pre-orienting them along the flight line (useful when working with them in GCPEditorPro), and stamping EXIF data, among others. The approach looks promising because it unlocks the potential of underused historical photographs.
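The scripts themselves aren’t shown here, so what follows is only a hypothetical Python sketch of two of the batch steps, not the actual implementation. It estimates each photo’s flight-line bearing from consecutive photo centres (the standard initial great-circle bearing formula), and it converts decimal coordinates into the degrees/minutes/seconds rationals used by the EXIF GPSLatitude/GPSLongitude tags. All function names are invented for illustration. Writing the tags into the files is left to whatever EXIF tool the scripts already use.

```python
import math


def initial_bearing(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from point 1 to point 2, in degrees
    clockwise from true north, in the range [0, 360)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    x = math.sin(dlon) * math.cos(phi2)
    y = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(x, y)) % 360.0


def flight_line_bearings(centres):
    """Hypothetical helper: one bearing per photo of a single strip.

    `centres` is a list of (lat, lon) photo centres in flight order. Each photo
    uses the direction towards the next centre; the last photo reuses the
    direction of the final pair.
    """
    if len(centres) < 2:
        raise ValueError("need at least two photo centres per strip")
    bearings = []
    for i in range(len(centres)):
        j, k = (i, i + 1) if i + 1 < len(centres) else (i - 1, i)
        bearings.append(initial_bearing(*centres[j], *centres[k]))
    return bearings


def to_exif_dms(value, positive_ref, negative_ref, sec_denominator=10000):
    """Hypothetical helper: decimal degrees -> EXIF-style rationals.

    Returns ((deg, 1), (min, 1), (sec_num, sec_denominator)) plus the
    reference letter ("N"/"S" for latitude, "E"/"W" for longitude).
    """
    ref = positive_ref if value >= 0 else negative_ref
    # Count whole units of 1/sec_denominator arc-second so that rounding
    # can never produce a seconds value of 60.
    units = round(abs(value) * 3600 * sec_denominator)
    degrees, rest = divmod(units, 3600 * sec_denominator)
    minutes, sec_units = divmod(rest, 60 * sec_denominator)
    return ((degrees, 1), (minutes, 1), (sec_units, sec_denominator)), ref


if __name__ == "__main__":
    strip = [(0.0, 0.0), (0.0, 0.01), (0.0, 0.02)]
    print(flight_line_bearings(strip))
    print(to_exif_dms(-34.5, "N", "S"))
```

For pre-orientation, if a scan’s top edge points along the direction of flight (an assumption about how the frames were scanned), rotating it clockwise by the returned bearing would bring north to the top.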

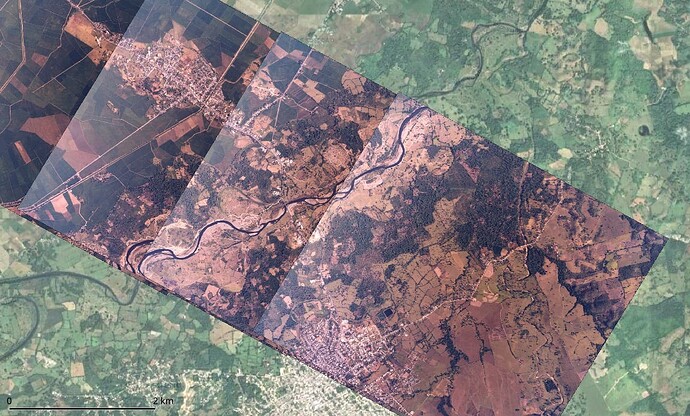

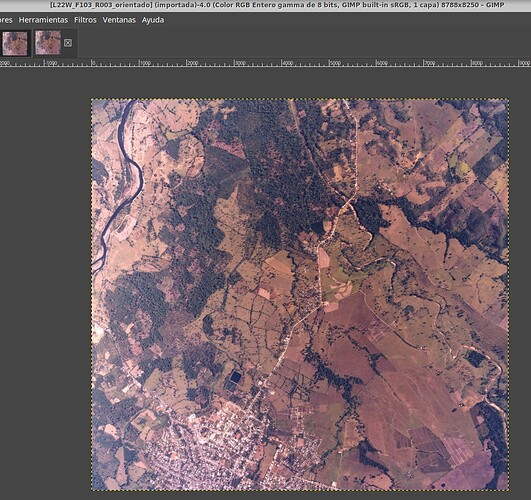

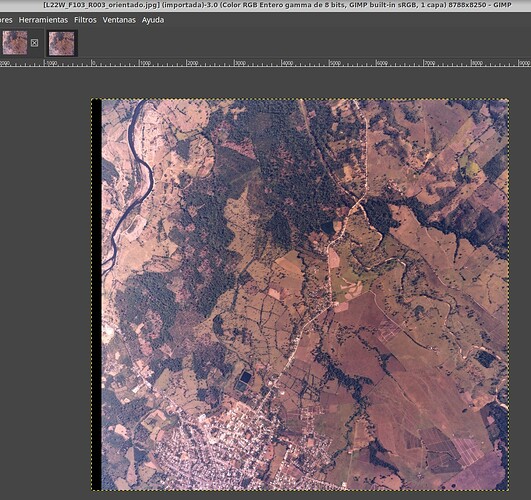

The results are mixed. As seen below, the orthomosaic is virtually perfect.

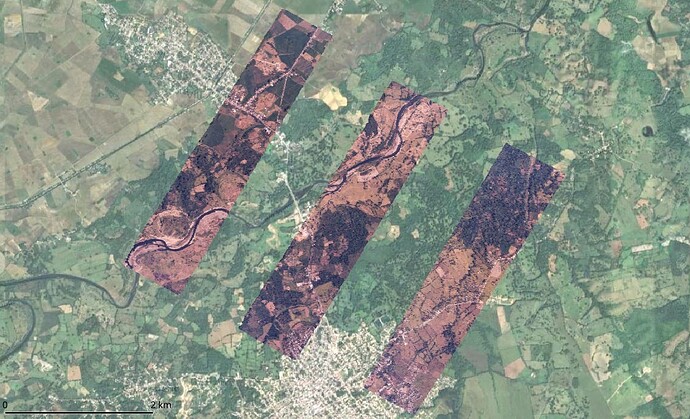

Here are the original photos for the example above, slightly cropped to remove the frame, rotated, and geolocated:

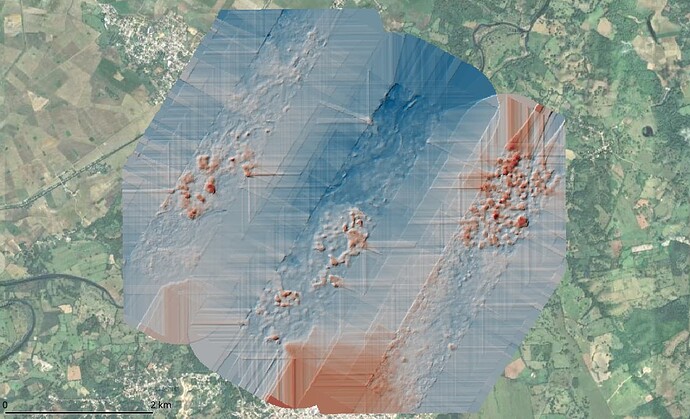

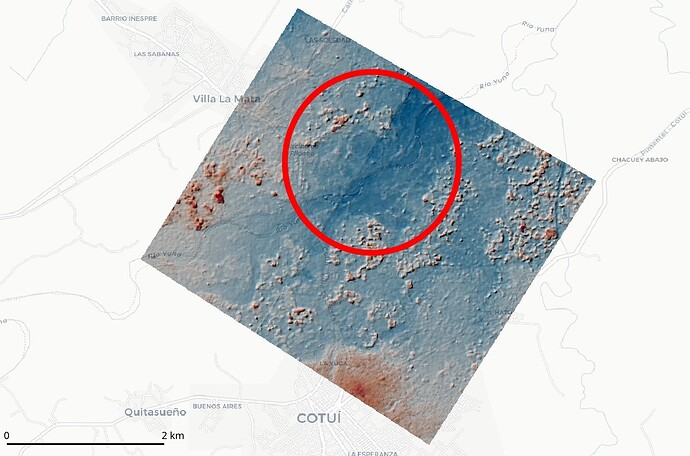

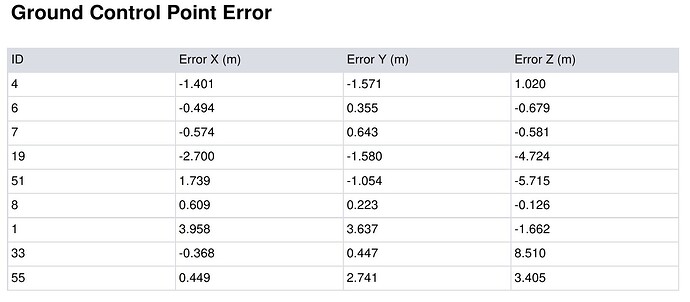

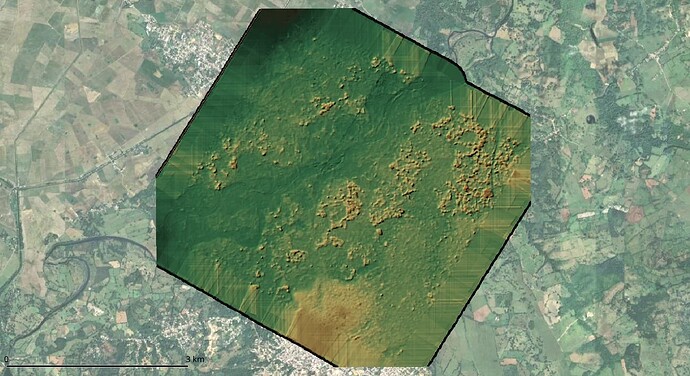

However, the point cloud, and consequently the DSM, is generated only in areas with triple photo overlap, and its accuracy falls short of expectations (in some cases I’m seeing vertical errors of up to 2 meters). See the results below for the default configuration:

I’ve processed these captures with the default options for generating a DSM and DTM. I’ve also tried various other settings, including runs without resizing, with little success. For instance, I’ve experimented with nearly all the options mentioned in this post and this one, but the results barely improve and the point cloud still has gaps. By “nearly all”, I mean that the highest `pc-quality` I could use was `high`: with `ultra`, processing ran for over 36 hours on a PC with an 8-thread processor and 64 GB of RAM before I had to stop it.

I’m aware that areas covered by only two photos are challenging for the current ODM engine, but I’d like to leverage the potential of SMVS or, alternatively, go back to PMVS (I recall changing the `depthmap_min_consistent_views` option in the config file from 3 to 2).
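To get a feel for how much of the block is seen by only two photos with the overlaps of this flight (60% forward, 20-30% side), here is a rough Python sketch. It assumes an idealised block: flat terrain, vertical photos, constant scale, and frames of neighbouring strips lined up. It is not how ODM computes anything, and the function names are invented for illustration. With frame length L and spacing B = (1 - overlap) · L, a ground point is covered by either floor(L/B) or floor(L/B) + 1 frames, and the average is L/B.

```python
from fractions import Fraction
from math import floor


def coverage_counts(overlap):
    """Share of ground covered by k frames along one axis, for an overlap
    in [0, 1). Returns {k: share}."""
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be in [0, 1)")
    n = 1 / (1 - overlap)  # L / B, the average number of covering frames
    low = floor(n)
    p_high = n - low       # share of ground covered by low + 1 frames
    dist = {low: 1 - p_high}
    if p_high:
        dist[low + 1] = p_high
    return dist


def view_count_distribution(forward, side):
    """Share of the block seen by k photos, assuming an ideal regular block
    whose strips are aligned, so that the view count equals the along-track
    count times the cross-track (strip) count."""
    result = {}
    for a, pa in coverage_counts(forward).items():
        for c, pc in coverage_counts(side).items():
            result[a * c] = result.get(a * c, 0) + pa * pc
    return dict(sorted(result.items()))


if __name__ == "__main__":
    # Fractions keep the shares exact; plain floats also work.
    for side in (Fraction(1, 5), Fraction(3, 10)):
        print(side, view_count_distribution(Fraction(3, 5), side))
```

With 60% forward and 20% side overlap this gives {2: 3/8, 3: 3/8, 4: 1/8, 6: 1/8}, and with 30% side overlap the two-view share is 2/7. So under these assumptions roughly 29% to 38% of the block is seen by only two photos. That share does not depend on the strip-alignment assumption, because any point in the side overlap is already seen by at least four photos. Cropping off the frame reduces the effective overlap, so the real two-view share is likely somewhat higher.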

As @smathermather mentioned in the 2018 post, PMVS was replaced with SMVS, and I understand that legacy PMVS workflows can no longer be run. Still, PMVS was a tremendously useful part of ODM for turning historical aerial photos into dense, precise point clouds. So, what are my options with the latest version of ODM using SMVS? Would cloud hardware (e.g., 256 GB of RAM and 1 TB of virtual memory) let me use settings like `pc-quality: ultra` and get better results? Or should I consider an older version of ODM, or perhaps NodeMicMac?
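As a rough accuracy budget for the vertical errors and the `ultra` question, the classic normal-case stereo relation σ_Z ≈ (H/B) · GSD · σ_px links height precision to the height-to-base ratio, the ground sample distance and the image-matching precision in pixels. The Python sketch below assumes a 230 mm film format with a 153 mm wide-angle lens (typical of analog metric aerial cameras, but the actual camera isn’t stated), vertical photos and flat terrain. The function names are made up for illustration.

```python
def height_to_base(focal_mm, format_mm, forward_overlap, baseline_frames=1):
    """Height-to-base ratio H/B for vertical frame photos.

    The ground footprint is format_mm * H / focal_mm, and photos that are
    `baseline_frames` exposures apart are separated by
    baseline_frames * (1 - forward_overlap) * footprint, so H cancels out.
    """
    if not 0 <= forward_overlap < 1:
        raise ValueError("forward_overlap must be in [0, 1)")
    return focal_mm / (baseline_frames * (1 - forward_overlap) * format_mm)


def height_precision(gsd_m, matching_px, h_over_b):
    """Normal-case stereo estimate: sigma_Z ~ (H/B) * GSD * sigma_px, in metres."""
    return h_over_b * gsd_m * matching_px


if __name__ == "__main__":
    # Assumed camera: 230 mm format, 153 mm lens; 60% forward overlap, 0.5 m GSD.
    for frames in (1, 2):  # adjacent pair vs. the outer pair of a triplet
        hb = height_to_base(153, 230, 0.6, baseline_frames=frames)
        for px in (0.5, 1.0):
            print(frames, px, round(height_precision(0.5, px, hb), 2))
```

Under these assumptions, σ_Z comes out at roughly 0.4 to 0.8 m for an adjacent pair (H/B ≈ 1.66) and 0.2 to 0.4 m for the outer pair of a triplet, whose base is twice as long. Errors approaching 2 m therefore look larger than geometry alone suggests. That may point to matching at a coarser depth-map resolution (the effective GSD of the depth map is what enters the formula) or to the SfM/GCP solution. A higher `pc-quality` can at best shrink the matching term; it does not change H/B.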

Any suggestions or insights would be greatly appreciated.

José