Not strictly related to the question in the subject line, but since I’m using ORB matching and watching the console log on a big task, I noticed something.

From my current log:

2021-11-12 09:15:57,817 INFO: Reading data for image DJI_0498_7.JPG (queue-size=1

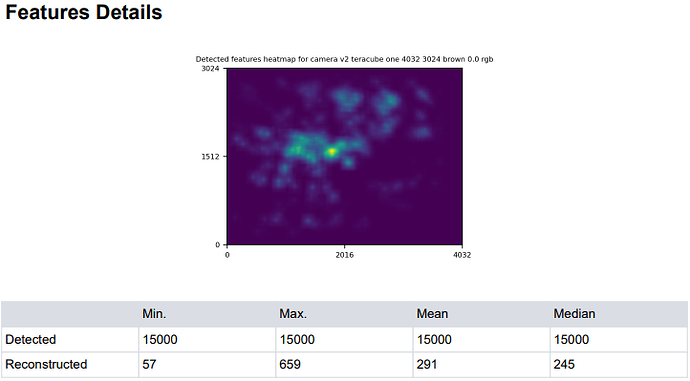

2021-11-12 09:15:57,818 DEBUG: Found 15000 points in 0.11483049392700195s

2021-11-12 09:15:57,830 DEBUG: Found 15000 points in 0.09299826622009277s

2021-11-12 09:15:58,007 INFO: Extracting ROOT_ORB features for image DJI_0498_7.JPG

2021-11-12 09:15:57,994 DEBUG: Found 15000 points in 0.09551215171813965s

2021-11-12 09:15:58,157 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.

2021-11-12 09:30:43,651 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.

2021-11-12 09:41:53,095 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.

2021-11-12 09:45:51,207 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked

2021-11-12 10:21:35,958 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.

2021-11-12 10:35:20,695 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.

2021-11-12 11:35:07,855 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.

and so on: the same line keeps appearing in the log for all the matched pairs.

Does this mean processing effort is being duplicated for this (and every other) image in the dataset, or is the log just noting a previously determined ‘no features masked’ result each time before attempting to match with another neighbour?
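
In case anyone wants to check how often this happens in their own log, here is a rough sketch that tallies the "No segmentation" message per image. It assumes the log lines look exactly like the ones quoted above. The function name and the regular expression are my own and are not part of the processing software.

```python
import re
from collections import Counter

# Assumed line format, taken from the log excerpt in this post:
# 2021-11-12 09:15:58,157 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.
NO_SEGMENTATION = re.compile(
    r"DEBUG: No segmentation for (?P<image>\S+), no features masked"
)


def count_no_segmentation(lines):
    """Return a Counter mapping image name -> number of 'No segmentation' lines."""
    counts = Counter()
    for line in lines:
        match = NO_SEGMENTATION.search(line)
        if match:
            counts[match.group("image")] += 1
    return counts


# A few lines copied from the log above, used here as sample input.
sample_lines = [
    "2021-11-12 09:15:58,007 INFO: Extracting ROOT_ORB features for image DJI_0498_7.JPG",
    "2021-11-12 09:15:58,157 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.",
    "2021-11-12 09:30:43,651 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked.",
    "2021-11-12 09:45:51,207 DEBUG: No segmentation for DJI_0498_7.JPG, no features masked",
]

for image, count in count_no_segmentation(sample_lines).most_common():
    print(f"{image}: {count}")
```

To run it on a real log, pass an open file instead of `sample_lines`. A count that roughly tracks the number of neighbours per image would suggest the message is emitted once per matched pair, though the count alone does not show whether any work is being redone.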