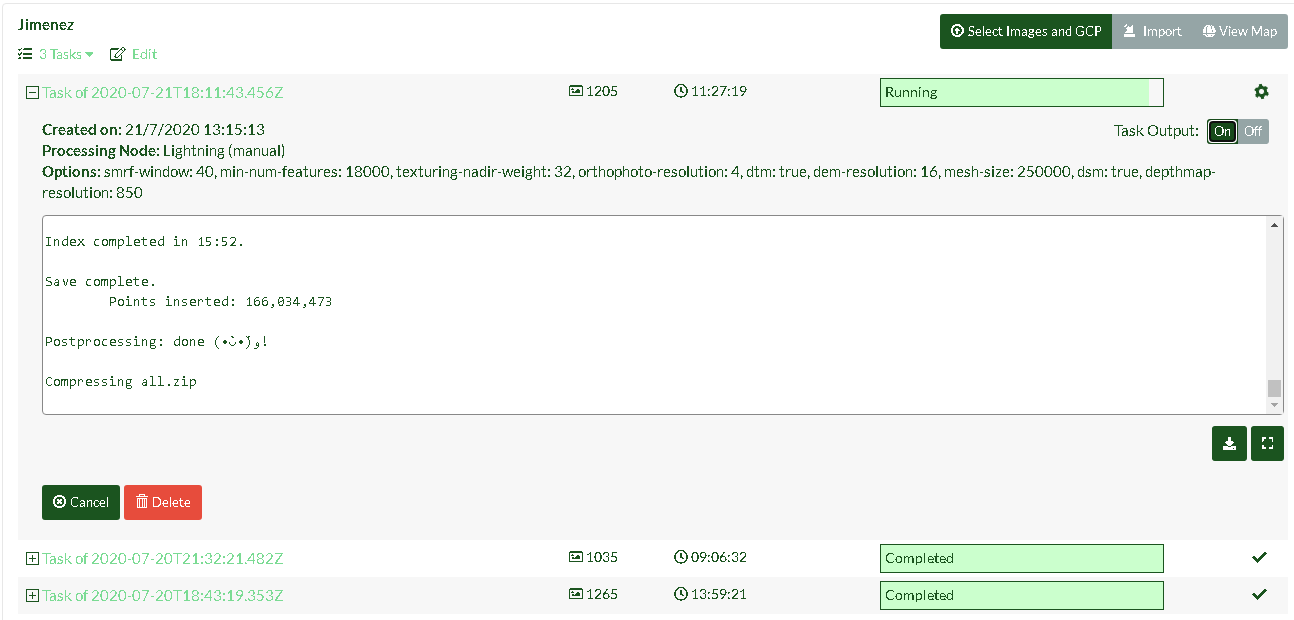

I have had pretty good luck with ODM on my server for a few months, but recently a number of tasks have failed because ODM appears to hang at the ‘Compressing all.zip’ stage. I have also tried the Lightning network, with similar results. This happens whenever I add GCPs to the dataset being processed.

I am using WebODM through Docker on a Dell R710 and only started having issues after an update about a week ago. I can see a Celery job running when it gets stuck, but even after several hours of waiting the task eventually fails.

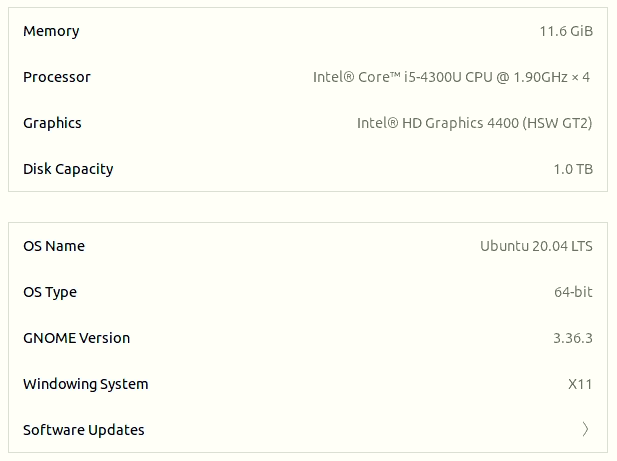

My test datasets have contained about 120 images or fewer. My server has approximately 1 TB of storage remaining and 80 GB of RAM available for ODM to abuse as necessary.

I am also not sure if it’s related, but the elapsed processing timer also gets stuck when it reaches this stage. If I refresh the page during the compressing stage, the timer resets to the elapsed time at which that stage began.

Terminal output:

conversion finished

55,569,731 points were processed and 55,569,731 points ( 100% ) were written to the output.

duration: 383.984s

Postprocessing: done (•̀ᴗ•́)و!

Compressing all.zip

I have tried updating everything a couple of times and still have had no luck. My next step will probably be to remove everything and reinstall from scratch, but I don’t know how effective that will be. I will upload the dataset to a drive when I get a chance.

Here are some related threads I have looked at, none of which offered an effective solution: