Hey there ODM community!

I’ve been a busy reader the last few days! First off - this community is really neat, thank you all for your help and interesting posts.

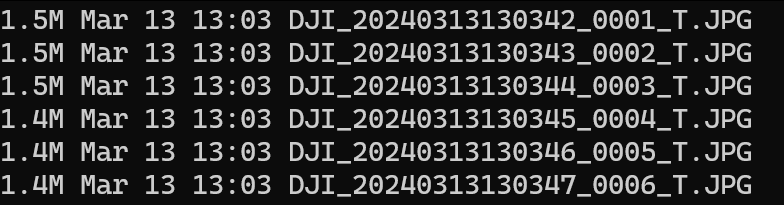

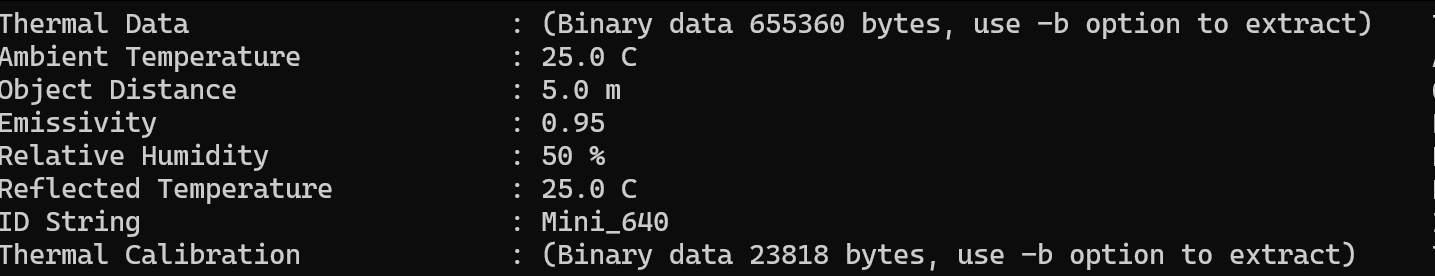

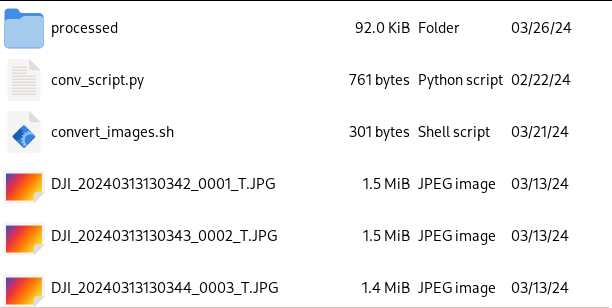

My use case for ODM is thermal mapping of solar power plants with a DJI Mavic 3T for inspection. Capturing thermal images with said drone is super easy; converting the proprietary thermal data for radiometric calibration sadly is not. Got it working though. If anyone is interested, I can share how.

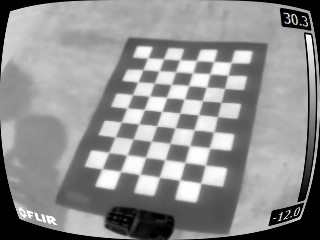

The thermal images from the 3T are kinda distorted (fisheye-ish). Here's a sample (still rJPEG, not converted to TIFF yet):

Also see that beautiful hot spot I marked there. This is why thermal inspection is really important.

For normal RGB images the distortion can be removed by lens calibration and similar means. For thermal it works the same way, but I am kinda struggling with the camera parameters ODM automatically chooses. They are always different, even when the same project is rerun. I’d love to take that factor out of the orthophoto processing.
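
To make clear what I mean by distortion and camera parameters, here is a minimal sketch of how I understand it, assuming a plain two-coefficient radial model on normalized image coordinates. The function names and the coefficients `K1` and `K2` are made up for illustration; they are not measured on the 3T and not taken from ODM's output.

```python
def distort(x, y, k1, k2):
    """Apply radial distortion to normalized image coordinates (x, y)."""
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor


def undistort(xd, yd, k1, k2, iterations=20):
    """Invert the radial model by fixed-point iteration.

    Starts from the distorted point and repeatedly divides by the
    distortion factor evaluated at the current estimate.
    """
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        factor = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / factor, yd / factor
    return x, y


# Hypothetical coefficients for a barrel-distorted lens (NOT from the 3T).
K1, K2 = -0.25, 0.05

# Distort an ideal point, then recover it again.
xd, yd = distort(0.4, 0.3, K1, K2)
xu, yu = undistort(xd, yd, K1, K2)
```

With a negative `K1` the point gets pulled towards the image center, which is the barrel/fisheye look. If the coefficients come out different on every run, the undistorted positions shift too, and that is the factor I'd like to pin down.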

Do you think it would be a smart idea to manually calibrate the thermal lens? I thought about creating a checkerboard pattern with alternating black and white paint, placed in the sun or something, and using Adobe Lens Profile Creator. Does it actually make sense to manually calibrate the lens, or should I look at other parameters?

Also - any help with creating high-detail thermal orthophotos would be really appreciated!

Have a good one!