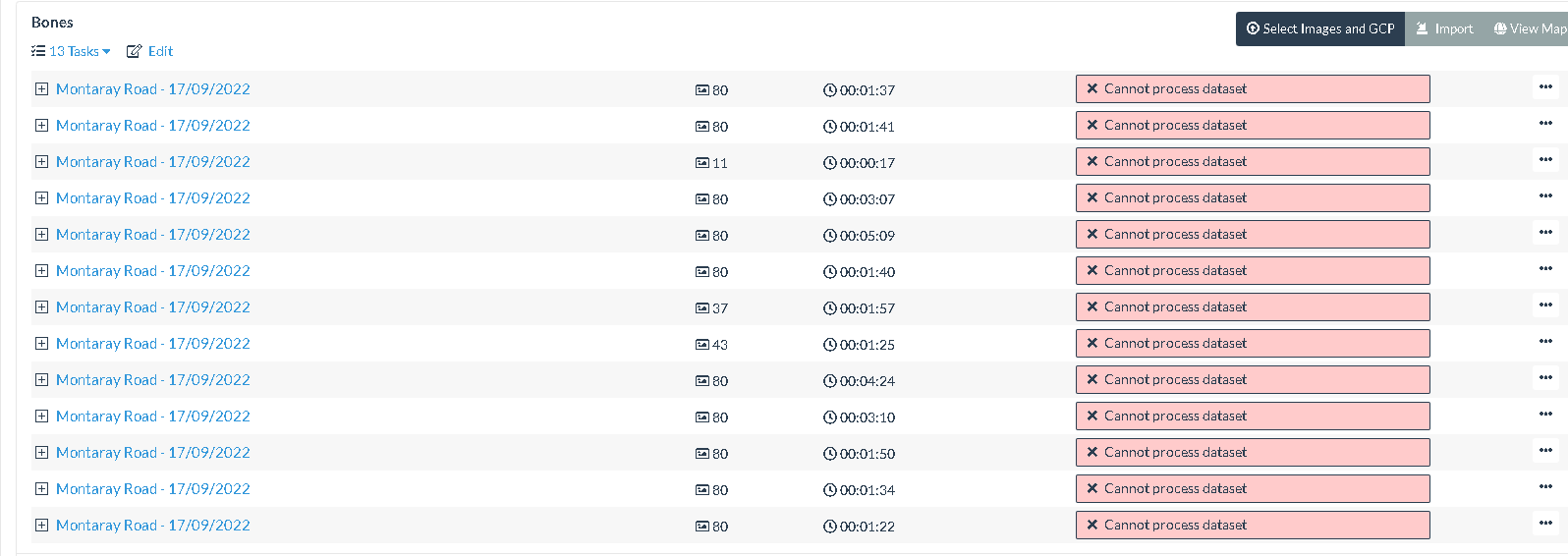

I’m attempting to produce a 3D model of a vertebra from 80 images, but processing keeps failing. The images are in good focus, and I have removed any with saturated areas to ensure plenty of features are detectable in all of them.

I’ve tried with and without GPU feature extraction, and with SIFT and the bruteforce matcher, but I’m not having any success so far.

I’ll try bumping up feature numbers, but apart from that, any ideas on what the issue might be?

Options: auto-boundary: true, feature-quality: ultra, matcher-type: bruteforce, mesh-octree-depth: 13, mesh-size: 500000, min-num-features: 12000, orthophoto-resolution: 0.05, pc-filter: 5, pc-quality: ultra, resize-to: -1, skip-orthophoto: true, use-3dmesh: true

End of the console log:

2022-09-17 15:07:32,762 DEBUG: No segmentation for 20220917_144102.jpg, no features masked.

2022-09-17 15:07:33,094 DEBUG: No segmentation for 20220917_144109.jpg, no features masked.

[INFO] running “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\bin\opensfm” match_features “D:\WebODM\resources\app\apps\NodeODM\data\13ce8fd6-2fa1-4f8c-ae62-fa77028bcb44\opensfm”

Traceback (most recent call last):

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\bin\opensfm_main.py”, line 25, in

commands.command_runner(

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\opensfm\commands\command_runner.py”, line 38, in command_runner

command.run(data, args)

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\opensfm\commands\command.py”, line 13, in run

self.run_impl(data, args)

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\opensfm\commands\match_features.py”, line 13, in run_impl

match_features.run_dataset(dataset)

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\opensfm\actions\match_features.py”, line 14, in run_dataset

pairs_matches, preport = matching.match_images(data, {}, images, images)

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\opensfm\matching.py”, line 46, in match_images

pairs, preport = pairs_selection.match_candidates_from_metadata(

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\opensfm\pairs_selection.py”, line 630, in match_candidates_from_metadata

g = match_candidates_by_graph(

File “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\opensfm\pairs_selection.py”, line 253, in match_candidates_by_graph

triangles = spatial.Delaunay(points).simplices

File “qhull.pyx”, line 1840, in scipy.spatial.qhull.Delaunay.__init__

File “qhull.pyx”, line 356, in scipy.spatial.qhull._Qhull.__init__

scipy.spatial.qhull.QhullError: QH6154 Qhull precision error: Initial simplex is flat (facet 1 is coplanar with the interior point)

While executing: | qhull d Qz Qbb Qt Qc Q12

Options selected for Qhull 2019.1.r 2019/06/21:

run-id 674689332 delaunay Qz-infinity-point Qbbound-last Qtriangulate

Qcoplanar-keep Q12-allow-wide _pre-merge _zero-centrum Qinterior-keep

Pgood _max-width 4.4e-16 Error-roundoff 1.2e-15 _one-merge 8.2e-15

Visible-distance 2.4e-15 U-max-coplanar 2.4e-15 Width-outside 4.7e-15

_wide-facet 1.4e-14 _maxoutside 9.4e-15

precision problems (corrected unless ‘Q0’ or an error)

3 degenerate hyperplanes recomputed with gaussian elimination

3 nearly singular or axis-parallel hyperplanes

3 zero divisors during back substitute

3 zero divisors during gaussian elimination

The input to qhull appears to be less than 3 dimensional, or a

computation has overflowed.

Qhull could not construct a clearly convex simplex from points:

- p2(v4): 0.64 0.85 0

- p1(v3): 0.64 0.85 0

- p80(v2): 0.64 0.85 0.85

- p0(v1): 0.64 0.85 0

The center point is coplanar with a facet, or a vertex is coplanar

with a neighboring facet. The maximum round off error for

computing distances is 1.2e-15. The center point, facets and distances

to the center point are as follows:

center point 0.6446 0.848 0.212

facet p1 p80 p0 distance= 0

facet p2 p80 p0 distance= 0

facet p2 p1 p0 distance= -0.12

facet p2 p1 p80 distance= 0

These points either have a maximum or minimum x-coordinate, or

they maximize the determinant for k coordinates. Trial points

are first selected from points that maximize a coordinate.

The min and max coordinates for each dimension are:

0: 0.6446 0.6446 difference= 4.441e-16

1: 0.848 0.848 difference= 0

2: 0 0.848 difference= 0.848

If the input should be full dimensional, you have several options that

may determine an initial simplex:

- use ‘QJ’ to joggle the input and make it full dimensional

- use ‘QbB’ to scale the points to the unit cube

- use ‘QR0’ to randomly rotate the input for different maximum points

- use ‘Qs’ to search all points for the initial simplex

- use ‘En’ to specify a maximum roundoff error less than 1.2e-15.

- trace execution with ‘T3’ to see the determinant for each point.

If the input is lower dimensional:

- use ‘QJ’ to joggle the input and make it full dimensional

- use ‘Qbk:0Bk:0’ to delete coordinate k from the input. You should pick the coordinate with the least range. The hull will have the correct topology.

- determine the flat containing the points, rotate the points into a coordinate plane, and delete the other coordinates.

- add one or more points to make the input full dimensional.

[INFO] running “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\bin\opensfm” create_tracks “D:\WebODM\resources\app\apps\NodeODM\data\13ce8fd6-2fa1-4f8c-ae62-fa77028bcb44\opensfm”

2022-09-17 15:07:35,387 INFO: reading features

2022-09-17 15:07:38,623 DEBUG: Merging features onto tracks

2022-09-17 15:07:38,623 DEBUG: Good tracks: 0

[INFO] running “D:\WebODM\resources\app\apps\ODM\SuperBuild\install\bin\opensfm\bin\opensfm” reconstruct “D:\WebODM\resources\app\apps\NodeODM\data\13ce8fd6-2fa1-4f8c-ae62-fa77028bcb44\opensfm”

2022-09-17 15:07:39,525 INFO: Starting incremental reconstruction

2022-09-17 15:07:39,532 INFO: 0 partial reconstructions in total.

[ERROR] The program could not process this dataset using the current settings. Check that the images have enough overlap, that there are enough recognizable features and that the images are in focus. You could also try to increase the --min-num-features parameter. The program will now exit.

I’ve tried a number of dataset sizes and numerous different parameter sets, but all fail with the same sort of error.
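
Looking at the Qhull output, the min and max for dimensions 0 and 1 are effectively identical, so it seems the points handed to the Delaunay triangulation all sit at the same 2D position. Below is a minimal sketch that I would expect to hit the same class of error outside of WebODM. It assumes the input is simply 80 copies of one 2D point (values copied from the log); that is my guess at what the data looks like, not something taken from the OpenSfM code. The `try_delaunay` helper and the random comparison points are made up for illustration.

```python
import numpy as np
from scipy.spatial import Delaunay

# Assumption: 80 images that all report the same 2D position.
# The values are copied from the min/max coordinates in the log above.
points = np.tile([0.6446, 0.848], (80, 1))

# Per-dimension spread; zero means every point is identical on that axis.
spread = np.ptp(points, axis=0)
print("spread per dimension:", spread)


def try_delaunay(pts):
    """Return the number of triangles, or None if Qhull rejects the input."""
    try:
        return len(Delaunay(pts).simplices)
    except RuntimeError as err:  # scipy's QhullError subclasses RuntimeError
        print("Qhull failed:", str(err).split("\n", 1)[0])
        return None


# Identical positions: the triangulation cannot be built.
degenerate_result = try_delaunay(points)

# For contrast, hypothetical positions that are actually spread out in 2D.
rng = np.random.default_rng(0)
spread_out_result = try_delaunay(rng.random((80, 2)))
print("triangles with spread-out points:", spread_out_result)
```

If that reading is right, the failure may have more to do with the position metadata of the images than with feature counts, which might explain why changing the feature settings has made no difference.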